I admit it's been difficult sometimes to work on this project given the current state of AI discourse. I know that what I do, and what other media artists do in terms of exploring AI, is not the same as what a corporation is trying to do, but I still feel the reverberations of public opinion.

Either way, I’ve been really enjoying digging through my old photos. I decided in the end to use CLIP Interrogator (a weird little art piece in and of itself) and CLIP Prefix Captions to generate text from images. I mostly use these online through Colab or Hugging Face, because the computing resources there are better and I can skip annoying dependency issues locally.

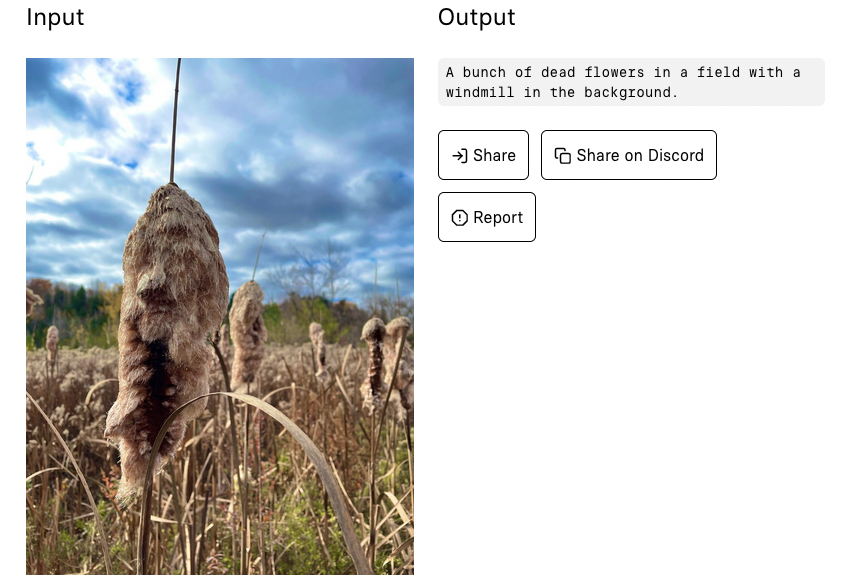

The first noticeable thing is how little detail exists in CLIP outputs, which I find somewhat surprising because sites will generally prompt you to be as detailed as possible when writing captions and alt-text. My guess is it might have something to do w/ the way CLIP's training data is labelled (possibly in rotten ways), or it might have to do w/ the way CLIP works in general. Either way it's an image identifying model, and I’ll have to look into its discourse more.

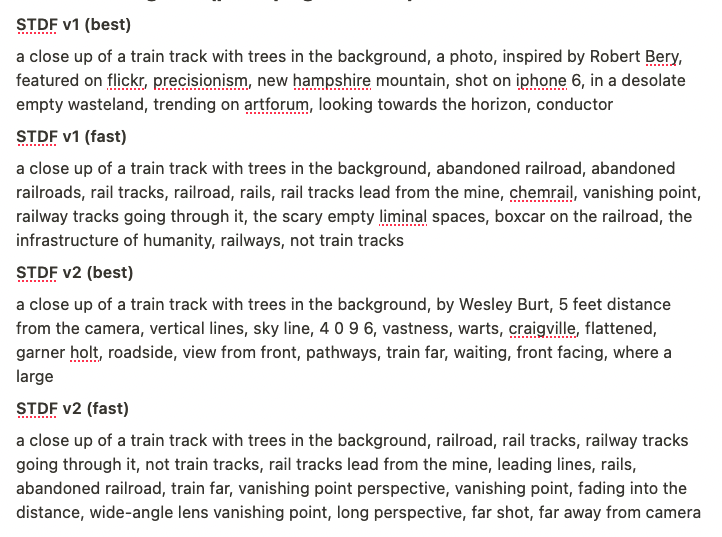

Back to CLIP Interrogator tho, this is a program made by a user named pharmapsychotic. It uses two models, CLIP and BLIP, and is generally used to generate prompts to feed into models like Stable Diffusion to generate similar images. So it's not what someone might officially use to caption anything, and I find the output to be kind of unpredictable. Here are some examples of it generating prompts for a picture of train tracks.

Tho I admit that CLIP Prefix on its own tosses out a weird one now and then too, the weirdest being for my boots on a pebbled beach. I rather like how short the CLIP Prefix captions are.
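For anyone who would rather script this than click through a notebook, here is a minimal sketch of running a folder of photos through CLIP Interrogator. It is not the setup described above (that runs on Colab and Hugging Face); it assumes the `clip-interrogator` pip package and Pillow are installed locally, and the helper names `make_interrogator_captioner` and `caption_folder`, the model name, and the list of file suffixes are all just illustrative choices.

```python
from pathlib import Path
from typing import Callable, Dict

# Assumption: which file types count as photos for this sketch.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def make_interrogator_captioner(
    clip_model_name: str = "ViT-L-14/openai",
) -> Callable[[Path], str]:
    """Build a captioner backed by the clip-interrogator package.

    Assumes `pip install clip-interrogator pillow`; the first call
    downloads the CLIP and BLIP weights, which can take a while.
    """
    # Imported lazily so the rest of the sketch works without these installed.
    from PIL import Image
    from clip_interrogator import Config, Interrogator

    ci = Interrogator(Config(clip_model_name=clip_model_name))

    def caption(path: Path) -> str:
        image = Image.open(path).convert("RGB")
        return ci.interrogate(image)

    return caption


def caption_folder(
    folder: Path, captioner: Callable[[Path], str]
) -> Dict[str, str]:
    """Run `captioner` on every image in `folder`, keyed by file name."""
    results: Dict[str, str] = {}
    for path in sorted(folder.iterdir()):
        if path.suffix.lower() in IMAGE_SUFFIXES:
            results[path.name] = captioner(path)
    return results
```

Calling `caption_folder(Path("old_photos"), make_interrogator_captioner())` would return a dict of file names to generated prompts (`old_photos` is a placeholder folder name). Passing the captioner in as a plain function means a different model, such as a CLIP Prefix Captions wrapper, could be swapped in without touching the folder loop.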

I’ve been doing some base generation and also playing w/ a newer Phomemo printer. I’ll write an update for that in a different post though. I’m considering switching to the Phomemo because its image handling is much better, but that also means altering some of this project to forgo my printer code, which I feel is probably ok. It's pretty old code, and maybe I can use some custom Python for assembled vs printer control.